When Your Vendor Becomes the Weak Spot — What Happened with OpenAI and Mixpanel

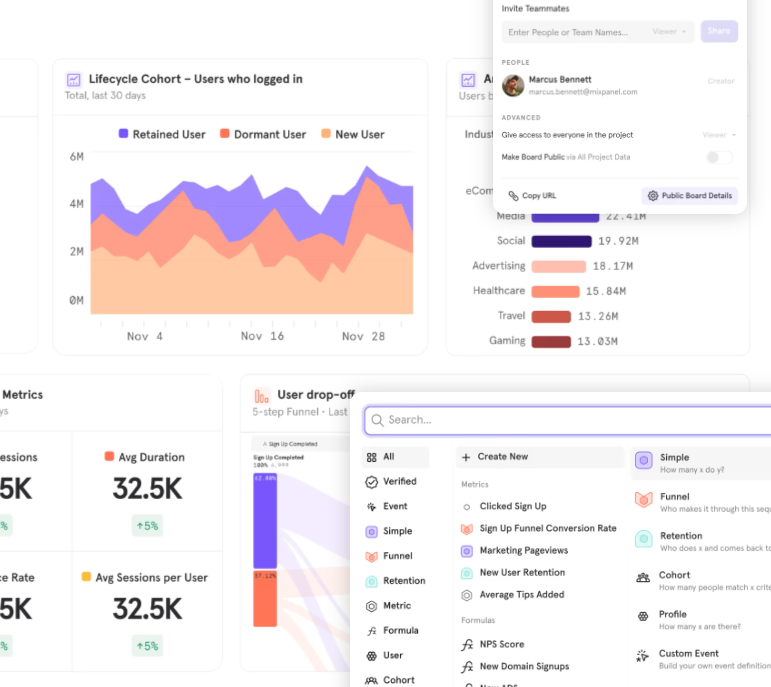

Late on November 25, 2025, OpenAI revealed a data-security incident that originated not on its own infrastructure but at a third-party analytics vendor, Mixpanel. The public announcement sparked concern across the developer community and renewed questions about third-party risk in AI and software services.

The breach traces back to November 9, when Mixpanel detected unauthorized access within parts of its systems. According to Mixpanel, the intrusion stemmed from a “smishing” campaign — a phishing-by-SMS tactic targeting employees.

On November 25, Mixpanel provided the relevant dataset to OpenAI. Soon after, OpenAI confirmed that the data exposed belonged to a subset of users of its API platform (platform.openai.com) rather than general users of services like ChatGPT.

What Data Was Exposed — And What Remained Safe

According to OpenAI’s disclosure, the exported dataset included “limited customer identifiable information and analytics information.” Specifically, the compromised data may have included:

- Names provided for API accounts

- Email addresses associated with those accounts

- Approximate location metadata derived from browser data (city, state, country)

- Operating system and browser details used to access the account

- Referring website information (i.e., which website led the user to the login page)

- Organization or user IDs linked to accounts on the API platform

Importantly, the most sensitive categories of data were not exposed. According to OpenAI, no chat content, API usage logs, API requests, account passwords, credentials, payment details, or government IDs were part of the leaked data.

Because of that, OpenAI says it is not requiring user password resets or API key rotations.

OpenAI’s Response — Cutting Ties, Not Hiding Facts

Once it learned of the breach, OpenAI acted quickly. It terminated its use of Mixpanel in production and began notifying affected organizations, admins, and individual users directly via email.

In public statements the company emphasized the incident did not arise from vulnerabilities in its own systems — rather, it was a supply-chain compromise.

OpenAI also pledged to review its vendor ecosystem more broadly, signaling that going forward it will raise security and privacy expectations for third-party partners.

Finally, as a precautionary measure, OpenAI recommended that affected users enable multi-factor authentication (MFA) if they had not already.

Why This Matters — Beyond Just OpenAI

To many, this breach reads like “not a big deal” — after all, no passwords, API keys, or sensitive financial or chat data were exposed. Yet from a security and privacy standpoint, it remains deeply concerning.

The leaked metadata (names, email addresses, locations, OS/browser details) could be weaponized for phishing or social-engineering attacks. If attackers combine this data with other publicly available information, they might craft credible spoofed messages that trick users into giving up credentials, clicking malicious links, or revealing 2FA codes. In other words, the attacker doesn’t always need passwords; often a convincing pretext is enough.

Moreover, this incident highlights a growing vulnerability inherent in complex software ecosystems: even if you harden your internal systems, your security can be undermined by third-party partners. With AI and SaaS platforms increasingly relying on analytics, telemetry, and third-party integrations, the “supply-chain” attack surface grows.

For developers, security professionals, and even end users, this breach should serve as a wake-up call. Vendor security — and the governance around third-party dependencies — must be treated with the same rigor as internal security.

What You Should Do If You Use OpenAI’s API (or Similar Services)

If you or your organization use OpenAI’s API or any service that relies on external analytics/telemetry providers:

- Ensure multi-factor authentication (MFA) is enabled

- Be on guard for phishing emails or social-engineering attempts that reference you by name or otherwise sound legitimate. Treat unexpected requests for credentials, 2FA codes, or attachments with skepticism

- Review and minimize the metadata your application collects or shares with third-party vendors, and avoid tracking users’ personal information unless it is required for core functionality

- Evaluate vendors carefully for their security hygiene (incident response plans, employee training, resistance to social engineering, logging and monitoring, etc.)

- Have a plan in place for vendor compromise: know how to quickly suspend access, revoke credentials, notify users, and rotate secrets if needed
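Two of the recommendations above, minimizing what you share with an analytics vendor and being able to suspend a vendor quickly, can be illustrated with a small sketch. This is not how OpenAI or Mixpanel handle events; it is a minimal example under stated assumptions. The allowlist contents, the `anon_id` key, and the `minimize_event` and `forward_event` functions are all hypothetical names chosen for illustration, and `transport` stands in for whatever client your vendor actually provides.

```python
import hashlib
import hmac
from typing import Callable

# Hypothetical allowlist: only the properties the analytics use case needs.
# Names, email addresses, and city-level location are deliberately absent.
ALLOWED_PROPERTIES = {"event", "plan", "page", "country"}


def pseudonymize(user_id: str, secret: bytes) -> str:
    """Replace a real user ID with a keyed hash (HMAC-SHA256).

    The vendor can still count distinct users, but cannot map the value
    back to an account without the secret, which stays in-house.
    """
    return hmac.new(secret, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize_event(raw_event: dict, secret: bytes) -> dict:
    """Return a copy of the event holding only allowlisted fields."""
    event = {k: v for k, v in raw_event.items() if k in ALLOWED_PROPERTIES}
    if "user_id" in raw_event:
        # "anon_id" is an illustrative key name, not a vendor requirement.
        event["anon_id"] = pseudonymize(str(raw_event["user_id"]), secret)
    return event


def forward_event(
    raw_event: dict,
    secret: bytes,
    transport: Callable[[dict], None],
    vendor_enabled: bool = True,
) -> bool:
    """Send a minimized event to the vendor unless the kill switch is off.

    Returns True if the event was handed to the transport, False otherwise.
    """
    if not vendor_enabled:
        return False  # vendor suspended: nothing leaves your systems
    transport(minimize_event(raw_event, secret))
    return True


if __name__ == "__main__":
    raw = {
        "event": "login",
        "user_id": "u-1",
        "name": "Ada",
        "email": "ada@example.com",
        "city": "Berlin",
        "country": "DE",
    }
    forward_event(raw, b"example-secret", print)
```

An allowlist is used rather than a blocklist so that a newly added field stays private by default. Note that a keyed hash is pseudonymous rather than anonymous: anyone holding the secret can recompute the mapping, so the secret should be treated like any other credential. In practice the `vendor_enabled` flag would likely come from configuration or a feature-flag system so that it can be flipped without a deploy.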

For all their promise, AI and developer-platform companies are not immune to the systemic risks of third-party systems. Protecting your data and your users means acknowledging those risks — and designing defensively from the start.